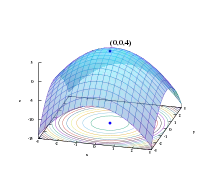

In optimization, a gradient method is an algorithm to solve problems of the form

$$\min_{x \in \mathbb{R}^n} f(x)$$

with the search directions defined by the gradient of the function at the current point. Examples of gradient methods are gradient descent and the conjugate gradient method.
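The two examples named above can be sketched on a small test problem. This is a minimal illustration under stated assumptions, not a reference implementation. The objective is assumed to be the quadratic f(x) = ½xᵀAx − bᵀx with A symmetric positive definite, so its gradient is Ax − b and its minimizer solves Ax = b. Gradient descent here uses a fixed step size of 0.1, which is an arbitrary assumption. The conjugate gradient routine is the linear variant for such quadratics. All function names (`matvec`, `gradient_descent`, `conjugate_gradient`, and so on) are hypothetical helpers written for this sketch.

```python
# Sketch: two gradient methods on an assumed quadratic test problem
#   f(x) = 0.5 * x^T A x - b^T x   (A symmetric positive definite),
# with gradient grad f(x) = A x - b; the minimizer solves A x = b.

def matvec(A, x):
    """Multiply matrix A (given as a list of rows) by vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def dot(u, v):
    """Euclidean inner product of two vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))

def gradient(A, b, x):
    """Gradient of the quadratic at x: A x - b."""
    return [ax - bi for ax, bi in zip(matvec(A, x), b)]

def gradient_descent(A, b, x0, step=0.1, tol=1e-10, max_iter=10_000):
    """Gradient descent with a fixed (assumed) step: x <- x - step * grad f(x)."""
    x = list(x0)
    for _ in range(max_iter):
        g = gradient(A, b, x)
        if dot(g, g) ** 0.5 < tol:  # stop once the gradient is nearly zero
            break
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x

def conjugate_gradient(A, b, x0, tol=1e-10):
    """Linear conjugate gradient: at most n steps in exact arithmetic."""
    x = list(x0)
    r = [-gi for gi in gradient(A, b, x)]  # residual r = b - A x = -grad f(x)
    p = list(r)                            # first direction is the negative gradient
    rs_old = dot(r, r)
    for _ in range(len(b)):
        if rs_old ** 0.5 < tol:
            break
        Ap = matvec(A, p)
        alpha = rs_old / dot(p, Ap)        # exact line search along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        rs_new = dot(r, r)
        # New direction: residual plus a multiple of the old direction (A-conjugate)
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x

A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
x_gd = gradient_descent(A, b, [0.0, 0.0])
x_cg = conjugate_gradient(A, b, [0.0, 0.0])
print(x_gd, x_cg)  # both approximate the exact minimizer [1/11, 7/11]
```

Both routines move along directions built from the gradient at the current point. Gradient descent takes dozens of fixed-size steps on this 2×2 problem. Conjugate gradient reaches the minimizer in two steps, up to rounding, because each new direction is kept A-conjugate to the previous ones.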

See also

- Gradient descent

- Stochastic gradient descent

- Coordinate descent

- Frank–Wolfe algorithm

- Landweber iteration

- Random coordinate descent

- Conjugate gradient method

- Derivation of the conjugate gradient method

- Nonlinear conjugate gradient method

- Biconjugate gradient method

- Biconjugate gradient stabilized method

This article is issued from Wikipedia. The text is licensed under Creative Commons - Attribution - Sharealike. Additional terms may apply for the media files.